The Research I Can’t Stop Thinking About

I spend a lot of time reading research. Probably more than is strictly healthy, to be honest. Reports, surveys, white papers, quarterly updates from every consultancy and think tank that publishes one. I have a folder on my desktop called “To Read” that I’m fairly sure qualifies as a fire hazard at this point. Most of it confirms what I’m already seeing with clients and audiences, some of it surprises me, and every now and then, something is so head-tiltingly good that I can’t stop thinking about it.

This month, that something was Pew Research Center’s latest roundup of how Americans actually feel about AI.

Not how tech leaders feel about it or how investors feel about it, but how regular people (your employees, your customers, your neighbors) actually feel about the technology that’s reshaping how we all work and live.

Here’s the headline number: half of U.S. adults say the increased use of AI in daily life makes them more concerned than excited. Only 10% say they’re more excited than concerned. And the part that should matter most to leaders is that the concern isn’t new. It’s been climbing steadily since 2021.

Let that land for a moment. We’ve had three years of AI breakthroughs, product launches, and breathless headlines about how AI is going to change everything … and the public response has been to get more worried, not less.

People aren’t afraid of AI. They’re afraid of AI with no one visibly at the wheel.

So, Why Is the Worry Growing?

It would be easy to blame the media, or sci-fi movies, or that one uncle who thinks ChatGPT is going to become sentient. But I think the real answer is simpler than any of that.

Most organizations haven’t told anyone what they believe about AI. And when there’s silence where clarity should be, people fill the gap with worry.

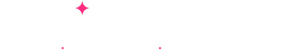

Just Capital published research today, and one number that really stood out to me was that only 37% of the 110 major companies they analyzed have published responsible AI principles or guidelines. Thirty-seven percent. I actually said that number out loud to make sure I’d read it correctly. (I had.) Which means nearly two-thirds of large organizations are deploying AI without ever articulating, publicly or internally, what they stand for when it comes to this technology.

And the Thales Digital Trust Index 2026 shows where that gap is landing. While 93% of IT leaders say they’re already using or planning AI initiatives, only 23% of consumers trust companies to use AI responsibly with their data.

That’s a 70-point gap between adoption and trust. Seventy points (if that were a restaurant health score, we’d all be taking our lives into our own hands!). I think that represents a number of challenges, communication being one of the biggest. More than anything, however, I think it’s a leadership opportunity that most organizations haven’t picked up yet.

The Silence Is Saying Something (Even When You Don’t Mean It To)

This is the part that I find myself talking about most with leadership teams. When your organization hasn’t said anything about what it believes about AI, it hasn’t actually said nothing. The absence of a clear position sends its own message. Employees read it as “proceed with caution, but don’t talk about it.” Customers sense that something’s changed but can’t quite see what. And the market assumes you’re figuring it out as you go … which, to be fair, most of us are. The difference is whether you’re figuring it out with intention or just hoping for the best.

I wrote a while back about AI guilt, that nagging feeling people get when they use AI but aren’t sure if they should, or how much, or whether they need to tell anyone. That guilt doesn’t come from the technology. It comes from the absence of clarity. When there are no principles, every single AI decision becomes a personal judgment call. Should I use it for this email? Is it OK to use it for this client proposal? What if someone finds out? Sound familiar?

That uncertainty weighs on people more than most leaders realize. And the good news is, it’s one of the most fixable problems in AI adoption right now.

The Pew data backs this up beautifully. They found that more than half of both the public and AI experts say they want more control over how AI shows up in their lives. People aren’t saying “take it away.” They’re saying “tell me what’s going on.” Give me some visibility. Give me a say. Give me a reason to trust you.

The fastest way to build AI confidence in your team? Tell them what you believe first.

Three Things Your People Need to Hear From You

I talk about this with organizations regularly as part of my Second Story Strategy, and the framework I share is deceptively simple. There are three layers stacked in a pyramid: principles, policies, and playbooks. (Oh … how I do love an alliterative framework!) Each one does something the others can’t.

Your principles are your beliefs. They’re the short, clear statements about what your organization stands for when it comes to AI. Not a 40-page legal document that lives in a drawer. Something a new hire could read in five minutes and actually understand. For example: “We use AI to enhance our work, not replace our thinking.” “We’re transparent when AI is part of our process.” “We don’t use AI for decisions that require human empathy and judgment.” Simple stuff, right? You’d be amazed how much relief it creates when people finally see it written down.

The key here is that principles are the foundation. They are the lens we (and our teams) can use to evaluate every AI decision and action.

And this matters more than most leaders realize. The Just Capital research found that safety and security is the number one AI concern for corporate leaders, investors, and the public alike, with roughly half of each group ranking it highest. Your principles are how you show people you take that seriously.

Your policies make those beliefs operational. They turn “we believe in transparency” into “here’s specifically what we do and don’t do.” What tools are approved? What data can and can’t go into AI systems? Who’s responsible when something goes wrong? Policies are where the gray areas get addressed, and in my experience, it’s the gray areas that keep people paralyzed. (If you’ve ever watched someone hover over the “send” button wondering whether it’s OK that AI helped draft it … that’s a policy problem, not a people problem.)

Pew found that 65% of American workers still say they don’t use AI much or at all in their job. Sixty-five percent! I’d bet a significant chunk of that isn’t people avoiding AI because they don’t see the value. They’re waiting for someone to tell them how it fits into how they work. In other words, they’re ready … they just need the green light. A clear policy gives them that.

Your playbooks make it practical. This is where it gets role-specific. A playbook for your sales team will look different from a playbook for your customer service team, which will look different from one for your marketing department. Each one answers the questions your team is actually asking: in this role, where does AI add value, where does the human stay in the lead, and what does “good” look like? If you’ve read my piece on the Delegation Dial, this is where that thinking comes to life. The dial gives your team a way to make those calls consistently instead of guessing every time.

• • •

Your Homework

I know that building all three layers sounds like a big project, and eventually it will be. But you don’t need to do it all at once. (Promise.) I’d start here.

Write your principles first. Just a page. Maybe less. What does your organization believe about AI? What will you always do? What will you never do? It doesn’t need to be perfect. A draft that exists beats a masterpiece that doesn’t. Share it with your team and invite their input. You might be surprised how much clarity even a conversation about principles creates. I’ve seen teams visibly relax just from the act of talking about it together.

Then pick one workflow and build its playbook. The workflow where AI questions come up most often. Walk through it and ask yourself where AI fits, where it doesn’t, and what the human owns. Write that down. Congratulations, you now have something people can actually follow. That puts you ahead of roughly 63% of major companies, according to the research we just talked about. How novel!

The organizations building trust aren’t the ones with the best AI tools. They’re the ones who’ve said out loud what they stand for.

The Trust Advantage

Pew’s data shows us that concern about AI is growing. Just Capital shows us that most companies haven’t addressed it. The Thales research shows us that consumers don’t trust companies to handle AI responsibly. And the Zamplia consumer survey from earlier this year found that 61% of consumers are more likely to do business with brands that clearly explain how they use AI.

Put all of that together and the picture is clear. Transparency isn’t just the right thing to do … it’s a genuine competitive advantage. The organizations that take the time to define what they believe, say it out loud, and build systems to back it up are earning trust at exactly the moment when trust matters most. And the good news? The bar is still low enough that even a strong first step puts you well ahead.

If defining what your organization believes about AI sounds like a conversation worth having, I’d love to help you start it. It’s one of my favorite things to work through with leadership teams. There’s a moment in every session where someone says “oh, is that all we needed to do?” and the answer is usually yes, that was a really good place to start. Let’s get you to that moment.

Frequently Asked Questions

Why are Americans increasingly concerned about AI?

Half of U.S. adults say AI in daily life makes them more concerned than excited, according to Pew Research Center, up from 37% in 2021. The concern stems not from the technology itself but from a lack of transparency, control, and clear communication from the organizations deploying it. People want to understand how AI is being used in their lives and have a meaningful say in the process.

As AI has become more visible in workplaces, customer interactions, and daily routines, the absence of clear guidelines from organizations has left people filling the gap with uncertainty. When companies stay silent about their AI use, people tend to assume the worst.

What are AI principles and why does every organization need them?

AI principles are short, clear statements about what an organization believes when it comes to using AI. They answer foundational questions like what you will always do, what you will never do, and where the human stays in charge. They provide the clarity that prevents every AI decision from becoming a personal judgment call for individual employees.

Without principles, teams either avoid AI entirely or use it inconsistently, both of which create risk. Principles don’t need to be complex. A single page that a new hire could read and understand is a strong starting point, and it’s far better than the silence most organizations are currently offering.

What is the AI trust gap?

The AI trust gap is the growing disconnect between how aggressively organizations are adopting AI and how little trust the public has in how they’re doing it. Research from the Thales Digital Trust Index 2026 found that 93% of IT leaders are using or planning AI, while only 23% of consumers trust companies to use AI responsibly with their data. That 70-point gap represents a significant business risk.

Closing the trust gap requires more than better technology. It requires transparency about how AI is being used, clear principles that guide decision-making, and visible accountability structures that give stakeholders confidence.

How many companies have published AI guidelines?

According to Just Capital’s 2026 analysis, only 37% of the 110 major companies studied have disclosed responsible AI principles or guidelines. This means nearly two-thirds of large organizations are deploying AI without publicly articulating what they believe about how it should and shouldn’t be used.

This gap is especially notable given that safety and security ranks as the top AI concern among corporate leaders, investors, and the general public. Organizations that have defined their principles are in a stronger position to build trust with all three groups.

What is the difference between AI principles, policies, and playbooks?

Principles define what your organization believes about AI. They’re your values and commitments in plain language. Policies make those beliefs operational by specifying what’s approved, what’s off-limits, and who decides the gray areas. Playbooks make it practical by showing people in specific roles exactly how AI fits into their work, where the human stays in the lead, and what good looks like.

Each layer does something the others can’t. Principles without policies are just intentions. Policies without playbooks leave teams guessing about implementation. The three work together to give an organization consistent, confident AI adoption.

Why aren’t more employees using AI at work?

Pew Research found that 65% of American workers say they don’t use AI much or at all in their jobs. While some of this reflects roles where AI isn’t yet relevant, a significant portion comes from uncertainty. When organizations haven’t communicated clear expectations about AI use, employees often default to avoidance rather than risk doing something wrong.

Clear policies and playbooks address this directly. When people know what’s encouraged, what’s expected, and how AI fits into their specific workflows, adoption follows naturally. The barrier for most teams isn’t capability or access. It’s permission and clarity.

How does AI transparency build customer trust?

A 2026 Zamplia consumer survey found that 61% of consumers are more likely to do business with brands that clearly explain how they use AI. Transparency gives customers the information they need to make informed decisions, and it signals that an organization respects their concerns enough to address them directly.

Conversely, AI use that’s discovered rather than disclosed erodes trust quickly. Being upfront about where AI is involved, and where humans remain in control, builds the kind of confidence that strengthens customer relationships over time.

What should a business leader do first about AI trust?

Start by writing your AI principles. Not a comprehensive governance framework, just a clear, honest statement of what your organization believes about AI. What you’ll always do, what you’ll never do, and where the human stays in charge. Share it with your team and invite feedback.

Then pick one workflow where AI questions come up frequently and build a simple playbook for how AI fits into that process. These two steps create immediate clarity and give your team something concrete to follow while you build out the rest over time.

What is the Delegation Dial?

The Delegation Dial is a practical framework for deciding when to lean into AI and when to stay human. It helps leaders and teams evaluate interactions based on the emotional stakes and complexity involved, rather than defaulting to “automate everything” or “keep everything human.”

For tasks that are routine, high-volume, and low-stakes emotionally, AI often adds significant value. For moments that require empathy, judgment, nuance, or relationship-building, the human needs to stay in the lead. The Delegation Dial gives teams a consistent way to make those calls rather than guessing each time.

How do AI principles connect to competitive advantage?

Organizations that have defined and communicated their AI principles are building trust at a time when trust is scarce. With only 37% of major companies disclosing AI guidelines and only 23% of consumers trusting companies to use AI responsibly, the bar is remarkably low. Organizations that clear it stand out immediately.

Beyond trust, principles create internal alignment that accelerates adoption. When everyone understands what the organization believes about AI, decisions happen faster, experimentation becomes safer, and the team moves together rather than in scattered directions. That coherence is a competitive advantage that tools alone can’t create.