You Bought the Ingredients. Who’s Making Dinner?

I had a call recently with a senior leadership team at a $1B+ property management and development company. A big organization. Serious about AI. I was impressed (and excited) that they’d purchased enterprise ChatGPT licenses for every employee. This was thousands of people, a full company-wide rollout. It was, on paper, a significant commitment.

When I asked how it was going, there was a pause. It was one of those pauses where you can hear someone deciding how honest to be.

“Honestly? We’re not really sure anyone’s using it.”

The CIO went on to tell me that no training had been provided … not even a recorded video course. No guidance on what “good” looked like for their teams. No playbooks, no principles, no time carved into anyone’s week to actually experiment and learn. They had handed every employee a fully stocked kitchen and assumed dinner would magically appear.

(It did not appear. It rarely does, much to my dismay.)

I understand the instinct completely. The pressure to move fast on AI is real, and buying the licenses feels like moving. It’s visible, it’s quantifiable, and it makes a great line in a board update. But there’s a meaningful difference between giving people access to a tool and actually activating that tool across a workforce, and that difference is where a surprising amount of value quietly disappears.

Let’s be totally honest, though: this isn’t just a billion-dollar company problem. I hear versions of this story from solo realtors who downloaded ChatGPT six months ago and have opened it twice, and from a regional sales director who sent her team a link to an AI tool with a “check this out!” email and never mentioned it again. The scale is different, but the gap is identical.

Access is a procurement decision. Activation is a leadership responsibility. And right now, most organizations are very, very good at the first one.

What the Numbers Actually Say

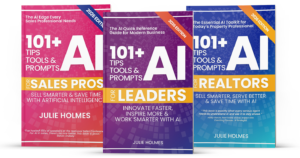

I’ve been running an AI Readiness Assessment with leaders across industries for the past couple of years, and the data, across more than 350 responses, tells a story that is equal parts encouraging and clarifying.

Eighty-eight percent of leaders believe AI will significantly impact or outright transform their industry, while only 25% are using it meaningfully across their organization. That 63-point gap between conviction and action is what I call the access-activation gap. The belief is there, but the execution infrastructure isn’t.

A few more data points: 70% of leaders personally use AI, while only 25% of their organizations do. That means that right now, in most businesses, the leader is experimenting quietly on their own laptop while the rest of the team carries on exactly as before.

(Now, between you and me, I can also tell you that LOTS of people are experimenting on their own, but the leaders don’t know it, and their colleagues don’t know it. It’s like a dirty little secret in some places.)

Individual adoption is lapping institutional adoption by a wide margin. And the statistic that surprises people most: 86% of our respondents feel genuinely positive about integrating AI. Curious, open-minded, optimistic. The fear narrative that dominated early AI conversations, it turns out, is mostly a myth, at least in this audience. Resistance isn’t the problem. The absence of a plan is.

Deloitte’s 2026 State of AI in the Enterprise report, which surveyed more than 3,200 business and IT leaders globally, lands in the same place. Worker access to AI has expanded by 50% in just one year, yet among those with access, only 60% use it in their daily workflow, a pattern that barely shifted from the previous year. Access is growing. Activation isn’t keeping pace.

Grab a calculator and let’s do the math on that together. If 60% of people with access use AI in their daily workflow, 40% don’t. That’s more than a third of the people who have the tool and aren’t using it day to day, and these are organizations actively engaged with AI, not the laggards. These folks are paying attention, but they’re still leaving significant value sitting on the table.

(Heavy sigh.)

Why the Gap Exists

The access-activation gap, in my experience, doesn’t exist because employees are resistant. It exists because organizations consistently underestimate what adoption actually requires. I’ve been in the enterprise tech space for a looooong time, and this isn’t really a new problem, but it is more pronounced now than it’s ever been.

I get it — it’s a very human, very understandable thing to get wrong. And it looks the same whether you’re a national property company or a two-person mortgage brokerage.

No recipe, just ingredients.

When there’s no guidance on what good AI use looks like for a specific role, most people don’t boldly invent the recipe. They open the tool, generate something that doesn’t quite land, and quietly return to what they already know how to do well. Not because they’re not capable, but because they’re busy, and they had no frame of reference for what they were aiming for.

No time to learn.

Developing new skills, especially ones that require experimentation and a reasonable tolerance for getting it wrong before you get it right, takes time that has to be deliberately set aside. If it isn’t built into the week, it gets sacrificed to everything else that is. This is as true for a team of 3,000 as it is for a team of one.

No permission to experiment.

If the culture doesn’t explicitly support trying things and occasionally producing something that makes you laugh at yourself, people default to safe. And safe, in the context of AI adoption, looks a lot like “I’ll wait until I know how to do this properly,” a sentence that never really finds its ending. My readiness data found that 56% of organizations provide zero AI training. None. So people aren’t just lacking permission to experiment; they’re lacking even the basic orientation to know where to start.

No modeling from the top.

When leaders aren’t visibly using AI, the organization reads that as a signal. Not in any memo, of course, just in the behavior everyone is quietly watching and interpreting. Deloitte found that top-down directives alone rarely drive meaningful change, and that high-performing AI implementations are built around empowered employees who experiment, share early wins, and become internal champions — supported by visible senior sponsorship. It’s grassroots energy and top-down modeling, working together.

• • •

Leading Out Loud: Get Ready to Show and Fail

So what does closing the gap actually look like in practice? Let me share something specific that I teach, because I think it’s the single most underrated move a leader can make right now. Plus, it’s gotten rave reviews from dozens of teams!

I call it Show and Fail.

(Get it? Show and Tell … is now Show and Fail … I really do crack myself up sometimes.)

The idea is simple. At a regular team meeting, the leader goes first. They share something they tried with AI that week: what they were attempting, what actually happened (especially the output that was, let’s say, creative in unintended ways), what they learned, and what they’d do differently. Then they invite others to do the same. I encourage a lot of laughing because, let’s be honest, some of the stuff that AI comes back with can REALLY miss the mark. One team I know actually gives a rotten tomato award to the worst AI output of the week.

That’s it. No formal training required. No workshop budget. No IT rollout.

Show and Fail solves three problems at once. It gives people explicit permission to experiment and get things wrong, which turns out to be the thing most people were waiting for. It normalizes the learning curve, because when the leader is laughing about their own prompting disaster, suddenly nobody else’s feels like a professional liability. And it creates a running internal knowledge base of what’s actually working in your specific context, for your specific work, which no off-the-shelf training can replicate.

The key detail is that the leader goes first. Every time. This isn’t a “let’s all share” exercise where the leader sits back and moderates. It’s a leadership behavior, and it only works when the person at the front of the room is genuinely willing to be a beginner in public. In my experience, when leaders do this consistently, the team’s AI confidence shifts noticeably within a few weeks — not because of anything formally taught, but because the psychological permission has been granted from the top.

It also happens to be pretty fun. Every day with AI is a bit of an experiment. You might as well have some company.

Show and Fail only works if the person at the front of the room is willing to fail first.

The Other Pieces That Matter

Show and Fail is a behavior. It works best alongside a bit of structure, and this is where most organizations, of any size, have real gaps.

My readiness data found that 75% of organizations have no AI policies in place whatsoever. No guidance on which tools are approved, how data should be handled, or who makes the calls when something murky comes up. People are using the tools anyway, which is fine, but they’re doing it without guardrails, which is less fine. A solo realtor using AI to draft client emails doesn’t need a 40-page governance document. She does need to know what not to put into a public AI tool. That’s a ten-minute conversation that most organizations haven’t had.

Role-specific playbooks matter too. Not general AI awareness content, but practical, contextual guidance: what does great AI use look like for your specific job? What prompts tend to work? What does the output need to look like before it’s ready to use? The more specific, the more useful. Generic training builds literacy. Specific guidance changes what people actually do on a Tuesday.

And the 20-60-20 Collaboration Equation is worth understanding regardless of your organization’s size, because it gives people a model for their own contribution, which is usually the piece they were most uncertain about. The first 20% is yours: choosing the right tool, writing a real prompt, bringing the context AI needs to do good work. The 60% in the middle is AI’s job. The final 20% comes back to you: reviewing, validating, making the output sound like you and serve the actual purpose. Without this model, people either over-delegate (take the first output as final, get something generic, lose trust in the tool) or under-use it entirely (try once, get something mediocre, decide it isn’t worth the effort). Both are avoidable.

• • •

Your Homework

None of this requires a transformation project. I mean, a project probably would help a lot, but for today, you can make real progress with a few deliberate choices.

- Find out what’s actually happening. Do you know how many of your people are genuinely using AI regularly? Not your best guess — the actual number. It’s almost always a surprise, and it tells you exactly where to focus first.

- Try Show and Fail this week. Seriously, at your next team meeting. Share something you tried, including the part that went sideways. Go first. See what happens. And if you’re a solo operator, find a peer or a colleague and make it a standing agenda item. You don’t need a team for this — you can totally make progress with just a willing human.

- Have the ten-minute policy conversation. Even if it’s informal. What tools are we using? What shouldn’t go into a public AI tool? Who do we ask when we’re not sure? You don’t need a legal document. You need shared clarity.

- Give people time. Not a module. Protected, recurring time to experiment. Even 30 minutes a week changes the adoption curve, and it sends a message that this matters — which often matters more than the 30 minutes itself.

The property company on that call hadn’t failed at AI. They had done something genuinely difficult: secured buy-in, found the budget, and shipped the tools. They just hadn’t written the recipe yet. And that part, as it turns out, is the part that makes dinner actually happen.

Whether you’re leading thousands of employees or running a business of one, the gaps are remarkably similar. You need time to learn. You need permission to get it wrong. You need someone to go first. That someone might as well be you.

If you’re working through what this looks like for your organization or your team, I’d love to help. Drop me a note and let’s talk.

Frequently Asked Questions

What is the AI access-activation gap?

The AI access-activation gap is the space between giving people AI tools and actually getting those tools used consistently, confidently, and well. It’s the difference between an organization that has purchased licenses and one that has meaningfully changed how work gets done.

Data from Julie Holmes’ AI Readiness Assessment of 350+ business leaders found that 88% believe AI will significantly impact or transform their industry, yet only 25% are using it meaningfully across their organizations. Access is a procurement decision. Activation is a leadership and culture challenge, and the two require completely different approaches.

Why do employees have AI tools but not use them?

The most common reasons aren’t resistance or lack of interest. Across hundreds of AI readiness assessments, the dominant feelings business leaders report about AI are curiosity, open-mindedness, and optimism. The barrier isn’t attitude. It’s the absence of guidance, training, time, and visible modeling from leadership.

When people are given a tool without a recipe, most of them don’t invent the recipe from scratch. They try once, get something underwhelming, and quietly return to what they already know. It’s a very human response to an underinvestment in context and support.

What is Show and Fail and how does it help AI adoption?

Show and Fail is a leadership practice where a leader shares, in a regular team meeting, something they tried with AI that week: what they were attempting, what didn’t work (with a laugh, because every day is a bit of an experiment), what did work, and what they learned. After going first themselves, they invite others to do the same.

The practice works because it solves three things at once: it gives explicit permission to experiment and get things wrong, it normalizes the learning curve across the team, and it creates a running internal library of what’s actually working in your specific context. The key is that the leader goes first, every time. When the person at the front of the room is genuinely willing to be a beginner in public, the team’s willingness to experiment follows.

Does AI adoption strategy look different for small businesses vs. large organizations?

The gaps are remarkably similar regardless of size. Whether you’re a solo realtor or the head of operations at a billion-dollar property company, the fundamentals are the same: you need time to learn, permission to experiment, some basic guidance on what good looks like, and someone willing to model the behavior first.

The structures may look different. A solo operator doesn’t need a formal governance committee. But they do need to know which tools are safe to use with client data, what a real prompt looks like versus a vague one, and that getting mediocre output the first time doesn’t mean AI isn’t for them. The recipe principle applies at every scale.

What is the 20-60-20 Collaboration Equation?

The 20-60-20 Collaboration Equation is a framework for working with AI as a genuine partner. The first 20% is human-led: choosing the right tool, writing a thoughtful prompt, bringing the context and intent that AI needs to do useful work. The middle 60% is AI’s job: generating, drafting, synthesizing, analyzing. The final 20% returns to the human: reviewing, validating, personalizing, making sure the output sounds like you and serves the real purpose.

Without this model, people tend toward one of two failure modes: over-delegating (taking AI’s first output as final, getting generic results, losing confidence in the tool) or under-using entirely (trying once, getting something mediocre, deciding AI isn’t worth the effort). The 20-60-20 frame gives people clarity on where their contribution sits, which turns out to be exactly what most people needed to know.

How do leaders close the gap between AI access and actual use?

Closing the gap requires a few things working together: a visible leadership practice like Show and Fail that models experimentation and grants permission to learn; basic AI principles that tell people what’s encouraged and what’s off-limits; role-specific playbooks that make good AI use concrete for each function; and protected time for experimentation built into the workweek rather than squeezed into the margins.

Deloitte’s research found that high-performing AI implementations are built around empowered employees supported by visible senior sponsorship — not top-down directives alone. The behavior modeling piece is not a soft add-on. It’s the mechanism that makes everything else stick.

How long does it take to see results from AI adoption?

Organizations that provide clear guidance, role-specific support, and visible leadership modeling tend to see meaningful behavior change within weeks. Those that distribute licenses and wait tend to wait considerably longer than expected.

Deloitte found that organizations designing for deployment from the outset, rather than treating scale as an afterthought, see far higher adoption, and that hands-on, role-specific training and visible executive advocacy materially shift employee behavior. The infrastructure work takes a little longer upfront. It pays off noticeably faster on the other side.

Why is shadow AI a sign that your activation strategy isn’t working?

Shadow AI — employees using unapproved AI tools outside official channels — tends to grow when people are curious and motivated but haven’t been given a clear, supported path to use AI well. Across Julie Holmes’ AI readiness data, 8 in 10 organizations have a confirmed or suspected shadow AI problem, including many that have provided some AI training.

The instinct is often to respond with more restrictions. A more effective response is usually to close the activation gap: give people better tools, clearer guidance, and explicit permission to experiment. When the official path is a good one, the unofficial paths become far less necessary.